Thread: GTX 1080

-

2016-07-24, 09:28 PM #1881

-

2016-07-25, 07:36 AM #1882

"Smash" is awfully optimistic. http://www.anandtech.com/bench/product/1731 shows 45-70 fps from one title to the next, which isn't "smashing" by any stretch of the imagination! And you're definitely not going to get the 144 Hz benefit with a single GTX 1070 that way.

-

2016-07-25, 01:40 PM #1883

-

2016-07-25, 02:04 PM #1884

Look, this utter nonsense has got to stop. The GeForce 1080, 1070, 1060 and the new Titan X are all Pascal chips, period.

http://www.anandtech.com/show/10325/...ition-review/2

http://www.anandtech.com/show/10325/...ition-review/4

http://www.anandtech.com/show/10325/...ition-review/9

http://www.anandtech.com/show/10325/...tion-review/10

http://www.anandtech.com/show/10325/...tion-review/11

http://www.anandtech.com/show/10325/...tion-review/12

Just looking at an architecture diagram and saying it's the same chip as Maxwell is not going to give you the full picture. CUDA cores, texture units, PolyMorph Engines, Raster Engines, and ROPs are all identical to Maxwell; however, 4th-gen delta compression, dynamic scheduling (e.g. Async Compute), massively improved preemption capabilities, Simultaneous Multi-Projection, GDDR5X, a revamped display controller and an updated video encode/decode block are all new.

I think Anandtech themselves said it best:

Yes, Pascal is broadly similar to Maxwell, but it has numerous key features that do set it apart. Originally Posted by Anandtech

If you are referring to how the GP102/106/104 are different from the GP100, well that is basically down to them being for two very different markets.

http://www.anandtech.com/show/10325/...ition-review/2

The only thing left out that could benefit the consumer-level chips in gaming is HBM2. But having a different memory controller doesn't make it a different chip entirely.

Of course, I doubt I'll get any sort of response to this; I'm still waiting for you to back up your claim that Time Spy targets DX12 feature level 11_0 because of Kepler, of all things, or that pre-Polaris cards don't even support feature level 12_1.

-

2016-07-25, 03:40 PM #1885

Tell me, how are two different architectures, P100 and GP102/104/106, the same architecture? Even AT and 4Gamer noted that the main difference between Maxwell and GP102/104/106 is the PolyMorph Engine, for even better tessellation fun. The core structure is pretty much the same. Ivy Bridge is still Sandy Bridge, die-shrunk with some features added onto it; even Intel noted that it's essentially the same thing with some other stuff tacked on. Just because it added some things doesn't make it something completely new and different.

-

2016-07-25, 03:57 PM #1886

All the things before this part are pretty much pointless. It doesn't matter how many new "features" you pack in; it doesn't change the hardware layout and doesn't change the uarch. We're not saying GP102/104/106 are simply die-shrunk GM200/204/206. We're saying that they're way closer to Maxwell than to Pascal, and they are, whether you like it or not.

About the part that I left in the quote, it doesn't matter. The problem with Time Spy doesn't have anything to do with what you're talking about to begin with.

The problem with Time Spy is that it strives to be a "common ground" DX12 benchmark, but it has hardly any compute in it at all. It tries its best to be as little DX12 as possible, so as not to make it obvious that Nvidia's current uarch doesn't benefit much from it. Having multi-engine support doesn't mean anything when there isn't any compute workload for it to work on.

They could've done something that exploits one of the strongest performance increasing features of DX12, but they didn't.

And even then, on top of that, their implementation is simply weird; everything about the benchmark is done in a way that benefits Nvidia.

-

2016-07-25, 05:49 PM #1887

No, they are Pascal; nothing about the chips is Maxwell. You can make up bullshit all you want; it doesn't change any of the facts. The stuff left out of the HPC part has got bugger all to do with gaming. Yes, Pascal is similar to Maxwell, I even put a quote there to indicate as much, but that doesn't make the consumer-level chips not Pascal parts. If you were instead claiming that Pascal is very similar to Maxwell, I would not disagree with you at all, because that is correct, but you're basically stating that the GeForce 1080 is not Pascal, which is just silly.

I mean, I'm the one actually linking stuff here to prove my point, all you're doing is basically the equivalent of waving your arms about and going "nu-uh".

So AMD doesn't gain almost 12% in performance when Async is enabled? Does that mean Doom and Ashes don't have async either?

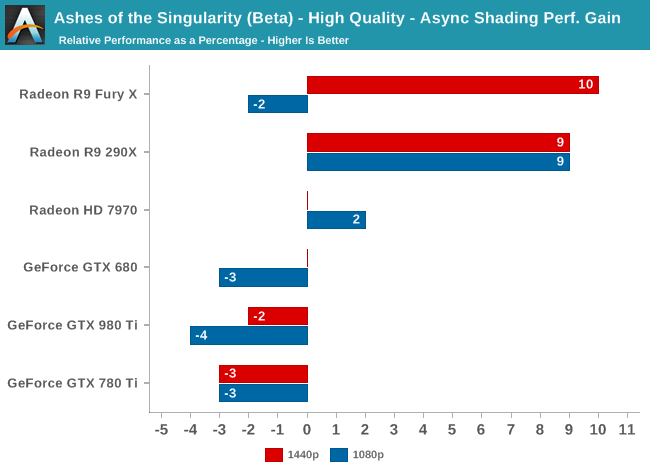

Let's see, the Fury X gains roughly 10% in Ashes:

But wait, isn't that exactly what Time Spy does?

Damn, guess Ashes is fake DX12 then, with fake Async. Surely Doom shows bigger gains (being the only true benchmark that is 100% accurate for both Nvidia and AMD, sarcasm).

Aww crap, Doom gets even less than a 10% performance boost from Async.

So I'm supposed to believe Time Spy does not do Async compute correctly, even though it gets a bigger performance boost for AMD cards than either Ashes or Doom?

Why did AMD not veto it? They have every right to do so:

http://www.futuremark.com/business/b...opment-program

Why does AMD approve of it then? They do it right here: Originally Posted by Futuremark

http://radeon.com/radeon-wins-3dmark-dx12/

But let's look at some of the claims this forum post you linked makes (not sure why I should bother; it's a freaking forum post, of all things).

Damn, I guess it's true then, Time Spy is full of shit! Oh wait, it's totally not: Originally Posted by Dubious Forum Post

http://www.futuremark.com/pressrelea...dmark-time-spy

Just read through the document: it mentions the word "parallel" quite a number of times and even provides images from GPUView showing how tasks are executed in parallel with Async on and off. They even note that Maxwell is unable to utilize Async compute at all. The word "concurrent" is used a total of... 0 times. Originally Posted by Futuremark

Yes, I do, and? This image has got nothing to do with Time Spy. The term "context switch" is not used once by Futuremark. Originally Posted by Dubious Forum Post

Futuremark themselves note this as true; they also indicated that Async Compute has been disabled by Nvidia in the driver, hence no performance loss (or gain, either). Originally Posted by Dubious Forum Post

Apparently not in this forum post. Originally Posted by Dubious Forum Post

So let's present the facts:

1. Enabling Async in Time Spy gives AMD cards a +12% performance gain, in line with the gains seen in Doom and Ashes.

2. Pascal can do Async, just not nearly as well as AMD can; its performance gains are much smaller.

3. Maxwell cannot do Async at all, and it is disabled in the driver, hence zero performance difference between on and off.
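To pin down what the percentages in that list mean: the "gain" is just the relative change in average fps between Async off and Async on for the same card. A minimal sketch, assuming you read the average fps off the benchmark results yourself; the function name async_gain_percent and the fps figures below are made up for illustration and are not taken from any of the graphs in this thread.

```python
def async_gain_percent(fps_async_off: float, fps_async_on: float) -> float:
    """Relative performance gain from enabling async compute, in percent.

    fps_async_off is the baseline (Async disabled); fps_async_on is the
    result with Async enabled. A negative result means a performance loss.
    """
    if fps_async_off <= 0:
        raise ValueError("baseline fps must be positive")
    # Multiply before dividing so simple inputs give exact results.
    return 100.0 * (fps_async_on - fps_async_off) / fps_async_off


if __name__ == "__main__":
    # Hypothetical numbers, purely illustrative.
    print(async_gain_percent(50.0, 56.0))  # 12.0
    print(async_gain_percent(60.0, 60.0))  # 0.0
```

Note this only compares a card against itself with the toggle flipped; it says nothing about which card is faster overall.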

Try to present some actual evidence next time, k?

Last edited by Zenny; 2016-07-25 at 05:54 PM.

-

2016-07-25, 05:56 PM #1888

-

2016-07-25, 06:12 PM #1889

That might very well be, but he presents zero evidence to back up his claims in this case. He is also blatantly going against what the developers themselves have said. Let's be real here: if this were really an issue, why does AMD promote Time Spy in its marketing material and not veto it (despite having every right to do so)?

Why is AMD not raising all hell if this benchmark were Nvidia-favored?

-

2016-07-25, 06:15 PM #1890 Elemental Lord

Join Date: Nov 2011, Posts: 8,358

I don't think anyone can argue with the fact that nVidia is calling it Pascal; therefore, it is Pascal, no matter what anyone else says. They are the ones who get to decide what their own stuff is named, and they call it Pascal, so it's Pascal.

However, I think the point being made is that they were originally calling something else Pascal, then decided to delay its release and called this Pascal instead. It's not what they were originally calling Pascal, because that had things that current Pascal does not have. So when someone says it's not Pascal, they are not saying that it's not really Pascal (again, nVidia calls it Pascal, so that's what it is); they are saying that it's not the Pascal we were originally told we were going to get. It's Maxwell with some small changes that they started calling Pascal, while they pushed back the release of what they had been calling Pascal.

-

2016-07-25, 06:24 PM #1891

-

2016-07-25, 06:35 PM #1892

Frankly, Zenny ought to read the many posts by Mahigan. In very plain terms, half the shit Zenny brings up here is already addressed in one form or another by Mahigan.

Most obviously, he explains that Maxwell should always incur a performance penalty with async, because enabling async will still queue up the task and cause the pauses that were the source of the stalling to begin with. The code doesn't care whether the feature is on or not; it's still the same damn code. The driver is irrelevant; only the code path should determine whether those "fences" stall the card.
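For anyone unsure what a "fence" is here: it's a synchronization point where one queue signals a value and another queue waits until that value has been reached. Below is a toy model in Python, with threads standing in for a compute queue and a graphics queue. ToyFence and run_frame are made-up names, this is not the D3D12 API, and it makes no claim about how any real driver or GPU schedules work. It only illustrates the point being argued: the fence wait is in the code either way, whether the two queues actually overlap (concurrent=True) or are run back to back (concurrent=False).

```python
import threading
import time


class ToyFence:
    """Hypothetical fence: a counter one queue signals and another waits on."""

    def __init__(self) -> None:
        self._value = 0
        self._cond = threading.Condition()

    def signal(self, value: int) -> None:
        with self._cond:
            self._value = max(self._value, value)
            self._cond.notify_all()  # wake anything waiting on this fence

    def wait(self, value: int) -> None:
        with self._cond:
            # Block until the fence has reached at least `value`.
            self._cond.wait_for(lambda: self._value >= value)


def run_frame(concurrent: bool) -> list[str]:
    """Run one toy 'frame' and return the order in which events happened."""
    log: list[str] = []
    lock = threading.Lock()
    fence = ToyFence()

    def record(event: str) -> None:
        with lock:
            log.append(event)

    def compute_queue() -> None:
        record("compute: start")
        time.sleep(0.01)  # stand-in for compute work
        record("compute: done")
        fence.signal(1)

    def graphics_queue() -> None:
        record("graphics: independent pass")  # may overlap compute if concurrent
        fence.wait(1)  # the dependent pass cannot start before compute signals
        record("graphics: dependent pass")

    if concurrent:
        threads = [threading.Thread(target=compute_queue),
                   threading.Thread(target=graphics_queue)]
        for t in threads:
            t.start()
        for t in threads:
            t.join()
    else:
        # Serialized: compute runs first, so nothing overlaps.
        compute_queue()
        graphics_queue()
    return log


if __name__ == "__main__":
    print(run_frame(concurrent=False))
    print(run_frame(concurrent=True))
```

In both modes the dependent pass lands after "compute: done"; the only thing the toggle changes in this toy is whether the independent graphics work gets to overlap the compute work.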

-

2016-07-25, 06:51 PM #1893

It's as if you don't read my posts at all. Maxwell does incur a performance penalty with Async, which is why Nvidia themselves disabled it in the drivers. Hence zero performance penalty, because no matter what Time Spy tries to do, the driver automatically disables it. But I'll reiterate: if Time Spy was done "incorrectly", why does the following happen:

1. Time Spy gains roughly the same from enabling Async Compute as all other DX12/Vulkan implementations on the market, which is to say around the 10% mark, plus or minus a couple of percent.

2. Maxwell gains nothing as it is explicitly disabled in drivers.

3. AMD had input throughout the entire development process and can veto things it feels do not work.

4. AMD is using this in their own marketing materials.

5. AMD has said nothing on the matter (other than approving it!).

6. Mahigan has zero, let me repeat that, ZERO evidence that Time Spy does anything incorrectly. He blatantly says things that are incorrect and has nothing to back them up.

Gah, I've posted actual graphs showing that the gain from Async in Time Spy is in line with the gains in Ashes and Doom. God, at least my posts actually have facts in them.

- - - Updated - - -

Which has got nothing to do with how Time Spy is doing Async Compute.

-

2016-07-25, 06:53 PM #1894

-

2016-07-25, 06:55 PM #1895

Well, to everyone buying any Pascal GPU: read this.

http://www.tweaktown.com/news/53121/...017/index.html

-

2016-07-25, 06:55 PM #1896

-

2016-07-25, 07:13 PM #1897

No we won't:

http://steamcommunity.com/app/223850...43951719980204

I've bolded the important bit, but please feel free to keep telling me I'm wrong. Originally Posted by Futuremark

He is wrong. The developer contradicted him on that point. He is free to offer up some proof of his claim, though. He even claims that Futuremark chose Nvidia's method over AMD's, which is, once again, completely wrong.

- - - Updated - - -

I bought the GeForce 1080 because I needed a high-end GPU a month ago, not next year. If AMD had bothered to offer something with better performance than the GeForce 1080, I would have bought that, just like I have in the past.

-

2016-07-25, 07:32 PM #1898

... The devs admitted that their implementation is extremely rudimentary for the sake of compatibility. The point being made is that there are different ways to approach AC, and you don't seem to allow for any alternative. In Ashes, for example, Pascal's performance remains unchanged when AC is on. And honestly, these synthetics are pointless...

-

2016-07-25, 07:41 PM #1899

Okay, let's just ignore Kollock from Oxide, developers of Ashes of the Singularity, saying the following (in regard to DX12 fences):

Overall, he links a few statements from Kollock, said developer, such as this one from http://www.overclock.net/t/1592431/a...#post_24970191:

AFAIK the GPU is required to flush before continuing. You can also see why MS made this setup - because now dozens of applications could actually be giving work to the GPU, and theoretically the OS could schedule them all. Windows is more than a game OS, after all.

And also he's got fun stuff here. http://www.overclock.net/t/1592431/a...#post_24969132

-

2016-07-25, 08:01 PM #1900

MMO-Champion
