Any apparent framerate increase is likely due to one of the following:

- Your system having a bottleneck

- The game being CPU intensive

- The CPU being multicore and the game being single-threaded

Ultimately, if you make the right processor choice, it isn't an issue, at least for gaming. And if you consider the standard person/gamer, limited in their knowledge of such things, voiding their warranty over FPS greed is not something I would advise. If we look at a typical scenario where an i5 would struggle at load (there is one? O_O), I dunno, you are getting 40fps (playable) on average and the grunt work is done on the GPU, so about 30% of the actual FPS is affected by the CPU being under pressure (i.e. there is much more concern for GPU lag than CPU lag, as the CPU processes a lot less). When I say lag, of course, I don't mean full computer usage; that would be a complete system crash in a literal sense, and the system grinding to a halt in a more practical sense (when the OS realises it has bitten off more than it can chew and queues tasks).

So if we consider 30% to be a realistic percentage of CPU processing within games, a 40-60% increase applied to 30% of an average FPS of 40 is about 6 fps, assuming the midpoint of the range (50% of 12 fps).
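The sum above can be checked with a quick sketch. It assumes the simplified model from this post (only the CPU-dependent share of the framerate scales with the overclock); the function name and its parameters are made up for illustration.

```python
def estimated_fps_gain(avg_fps, cpu_share, cpu_speedup):
    """FPS gained if only the CPU-dependent share of the framerate scales.

    avg_fps:     average framerate before the overclock (40 in the post)
    cpu_share:   assumed fraction of the framerate that depends on the CPU (0.30)
    cpu_speedup: claimed fractional improvement (0.40 to 0.60)
    """
    return avg_fps * cpu_share * cpu_speedup


low = estimated_fps_gain(40, 0.30, 0.40)
mid = estimated_fps_gain(40, 0.30, 0.50)
high = estimated_fps_gain(40, 0.30, 0.60)
print(f"{low:.1f} to {high:.1f} fps, midpoint {mid:.1f} fps")
# prints "4.8 to 7.2 fps, midpoint 6.0 fps"
```

The 30% share is the guess that drives the whole result; change that and the estimate changes with it.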

You state a 40-60% complete increase in FPS, but I would contest the possibility of that without a serious system bottleneck, as I know that could only be possible in a game where the CPU is handling most of the processing. I am not completely informed on CPU architecture, but I do know that there are different layers in programmed code:

While I have never investigated personally, I would believe there to be a hierarchical, tree-like priority in place, controlled by the OS, the graphics API and the application.

The operating system has the highest priority and that's where the core process runs; consider that the "engine platform" for the game. That acts like a library of functions that can be accessed by the CPU and the game itself. So the engine is like "CryEngine", "Unreal Engine" or WoW's in-house engine: it's not the game code itself but the platform on which the game runs. This game platform communicates with the operating system and the graphics API within that operating system (DirectX or OpenGL would be the candidates in today's world, unless it is some commercial CAD/CAM/rendering). The graphics API is loaded into the gaming platform so that its library of specific functions is available, and those specific functions make calls to the GPU, which renders immediately to the monitor. All of the graphics will be sent this way - and sometimes even physics too, on CUDA or PhysX cards.

On the same level as the graphics API is the actual game code - the physics, the events, the procedures and scripted happenings of the game. It is likely hard for a regular gamer to visualise: it's an NPC, your game character and its items (as pure code); it's your falling down to earth (but not visually). In a game like WoW, it can be a lot of code; on your OS, it is a lot of code; in a game like Crysis, HL2 etc. there is a lot of physics coding. But in comparison to what has to be sent through the graphics API it is the minority.

There will likely be something just before, and in between, the graphics part of the game platform and the game part of the game platform that is a "tick", something to keep them both in sync so that you don't fall while your character appears to be flying. Anyway, with this information you can see that unless your CPU is unable to keep up with your GPU (to stay in sync), it is unlikely that overclocking your CPU would provide a great increase to FPS (in comparison to a GPU overclock).
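As a rough illustration of that "tick", here is a generic fixed-timestep loop. This is only a sketch of one common pattern, not how WoW or any particular engine actually does it, and every name in it is hypothetical.

```python
def run_frames(frame_times, tick):
    """Simulate a fixed-timestep loop.

    frame_times: seconds each rendered frame took (hypothetical input)
    tick:        fixed length of one game-logic step, in seconds
    Returns (logic_ticks_run, frames_rendered).
    """
    accumulator = 0.0
    ticks = 0
    frames = 0
    for dt in frame_times:
        accumulator += dt
        # Run as many fixed-size logic steps as the elapsed time allows.
        while accumulator >= tick:
            ticks += 1          # game state (physics, events) advances here
            accumulator -= tick
        frames += 1             # the frame is drawn once per loop pass
    return ticks, frames


# Slow rendering: 4 frames of 0.5 s each, logic step of 0.25 s.
print(run_frames([0.5] * 4, tick=0.25))    # prints (8, 4)
# Fast rendering: 8 frames of 0.125 s each, same logic step.
print(run_frames([0.125] * 8, tick=0.25))  # prints (4, 8)
```

In both runs the logic advances by the same amount per second of real time (one tick per 0.25 s), no matter how many frames get drawn, which is the "stay in sync" idea.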

Essentially the issue with having an i5 is that its per-core clock is not great for single-threaded applications. In a true multi-threaded application there would be no bottleneck and no reason to overclock, as the gain would likely not even be seen.

I don't know what i5 you have but I assume it was a <3.0GHz stock version. If it was greater than 3.0GHz then there was a serious system bottleneck, as a 3.0GHz i5 would handle practically the whole market of available games without overclocking giving a substantial increase, except in a seriously CPU-intensive game like Cryostasis.

((( There are other variables, such as your background applications, what you are multitasking, what OS you are on etc., but essentially what I said above holds true )))

I don't disagree that you got a performance increase, but the increase would have been negligible past the point where the CPU can handle the processes coming at it. So unless your CPU is under strain to begin with, it will get no benefit from the overclock. The higher your FPS is in the game, the more likely that the CPU overclock will not help (except perhaps in situations where it is a massive CPU overclock of 1GHz and your FSB is linked and synced with your RAM). So there was an inherent problem with your system that made your overclock so significant.

tl;dr:

What I posted above is not strictly true in all situations but it is what I have encountered while overclocking many generations of CPUs in the past 8 years. CPU overclocks are beneficial but are not as significant as they are made out to be nowadays. Yesteryear they could be the difference between running Counter-Strike and not running Counter-Strike; nowadays it's like running L4D2 at 120fps or running it at 125fps.

(GPU overclocks are still VERY significant - but I would always advise buying a pre-overclocked GPU unless you are a dedicated overclocker or have a lot of money, as GPU overclocks seriously lower the life of the GPU and make it more prone to failure, and a personal overclock voids warranty.)

Thread: Building a new GAMING computer

-

2010-10-03, 01:17 PM #21 The Lightbringer (Join Date: Aug 2010, Posts: 3,037)

-

2010-10-03, 02:45 PM #22

Can we get a tl;dr for the post above mine?

Former raider of Accession [US-Stormreaver]

-

2010-10-03, 04:32 PM #23

-

2010-10-03, 06:17 PM #24 The Patient (Join Date: Aug 2010, Posts: 207)

^

It was a good read and helpful. A nice change from the fanboyism of some...

-

2010-10-03, 06:22 PM #25

Welcome to World of Warcraft. This game is insanely CPU limited. This sentence alone invalidated the rest of your ramblings.

Overclocking my GPU gave me a gain of 2 fps. Again, welcome to World of Warcraft.

[edit: Check out this post and the one following it. At stock, my system gave me 24fps in Dalaran, 1920x1080, 8x multisample, full ultra graphics. When I was done overclocking, my system gave me 44fps with the same settings and scenario. That's an 83% gain. I never claim to have gained 83% overall, though, as there was nothing horrendously intensive going on. Also, the last post shows my GPU overclocking results. This is called theory vs practice.]

-

2010-10-03, 08:05 PM #26

I'm pretty sure that if you're not making a lot of money you don't want to spend 3 to 5 grand.

---------- Post added 2010-10-03 at 03:06 PM ----------

Just a tip: if you're going to troll, don't post at all.

---------- Post added 2010-10-03 at 03:07 PM ----------

Nope, I don't plan on overclocking, but do you think it's possible with my parts?

-

2010-10-03, 08:56 PM #27 Grunt (Join Date: Feb 2010, Posts: 23)

Don't quote me, I'm just in a rush.

Use that information to determine your build - should be fairly easy.

You don't 'need' SLI for any of the games you listed either; it'll only give a performance boost when high AA and shadow detail are enabled.

Stop going off topic. Fuck my life.

Last edited by dgen; 2010-10-03 at 09:00 PM.

-

2010-10-03, 09:32 PM #28

-

2010-10-03, 10:48 PM #29 The Lightbringer (Join Date: Aug 2010, Posts: 3,037)

Well, if you count Dalaran as not being a failure on the part of planning and design, then yeah. You would think Blizz learnt with Shattrath but apparently it took two expansions to understand.

Anyway, considering an environment in WoW at its most intensive where Dalaran is not concerned (because that is just poor application design as opposed to a true benchmark), it would likely be a 25-man raid environment. In a 25-man raid environment the problem is with the multitude of spell effects.

If spell effects are processed via the CPU then I could see a cause for overclocking it. But increasing my FPS in Dalaran is not my prime concern. If I can raid, quest, BG etc. and pull a decent enough framerate in Dalaran to move around, then I have accomplished playing the game, as I rarely actually need to be in Dalaran.

A GPU overclock is substantially more effective at increasing overall gaming FPS. Any counter argument to that based on individual games is moot in that the majority of games benefit from a GPU overclock more than they do a CPU overclock and that includes new games.

If the OP wants to process Dalaran for his AFK'ing then teach him to overclock and have him risk his hardware and warranty. Personally I wouldn't bother with running Dalaran and would focus on making it as simple to build as possible, while providing good throughput performance without disregarding individual component or full system warranty.

The PC that dgen mentioned is good, great for novice builders too. I haven't had AMD for a while however so don't trust them, and Intel's i5 range and many i7s are reasonably priced, as are their boards.

I'd also add that RAM vendors can be confusing and ultimately are ignorable if you focus on the timings. If it really peeves you not to have a trustworthy name then aim for Corsair, Mushkin or OCZ. Kingston are arguably the most reliable "cheap" memory vendor.

460 is easily the best bang for buck right now, as I said, get a Gainward GLH or SSC FTW, it's worth the extra £10-20 as the overall FPS increase means your FPS per Pound is much higher. (It also puts the 460 not far beneath a 480 in terms of FPS). The 5850 being better in dgen's post is misleading, the 460 comes on top on occasion, they are approximately the same card, getting the GLH or SSC FTW versions of the 460 will certainly be better than the 5850.

dgen has the right PSU, although I might suggest the 550W for upgrading purposes, especially if in the future you came across a second 460 nice and cheap. Only worth it if you run 1920x1200+ though.

-

2010-10-03, 11:19 PM #30

Why do you argue that which you don't know anything about?

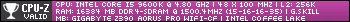

Here is a benchmark of my i5 750 overclocked to 3.8GHz in Ruby Sanctum. Here is a benchmark of my i5 750 stock in Ruby Sanctum. Quick breakdown for you:

Stock: 18fps min, 44fps avg, 93fps max

Overclocked: 29fps min, 51fps avg, 109fps max

That's 61% better minimum framerate, 16% better average framerate, and 17% better maximum framerate. As anyone will tell you, minimum framerate is of highest concern, since that's where you're going to notice any choppiness or stutter. WoW is CPU bound. A better/faster CPU will get you higher minimum framerate. It's been tested time and time again.
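For anyone who wants to check those percentages, a small sketch using only the six figures quoted above (the helper name is made up):

```python
def percent_gain(before, after):
    """Relative improvement of `after` over `before`, in percent."""
    return (after - before) / before * 100


stock = {"min": 18, "avg": 44, "max": 93}
overclocked = {"min": 29, "avg": 51, "max": 109}

for key in stock:
    print(f"{key}: {percent_gain(stock[key], overclocked[key]):.0f}%")
# prints:
# min: 61%
# avg: 16%
# max: 17%
```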

Care to continue babbling incoherently?

-

2010-10-04, 01:21 AM #31 The Lightbringer (Join Date: Aug 2010, Posts: 3,037)

What I see is you benchmarking two non-identical circumstances in a game that can vary IMMENSELY. Comparatively, the average FPS is well within the bounds of being different due to the nature of different circumstances, such as poor camera handling.

Overclocking is not a benefit to throw upon the masses, it is not something I would advise, it is not something I would recommend. It is something I would recommend people avoid. Alongside that, the GPU will in most circumstances in most games trump the CPU in FPS per % overclock. If you can tell me that spell effects, shadows, the new water effects, the higher texture resolutions and such are processed on the CPU then I will agree that the CPU provides a more substantial overclock even in WoW.

The truth is, your GPU maxes the game, in fact it overkills it. My GPU does not, and to upgrade from my 8800gt to something better will give a higher framerate increase than if I overclocked my CPU (q6600@3ghz w/ ddr2 800 l&s)... hell, it would give a higher framerate increase than if I bought an i7, on a completely new board, with ddr3 ram.

I would probably get about... 10 fps increase from the new CPU as opposed to about 40-60 from the GPU. Comparatively, upgrading from a stock q6600 to a stock i5 750 in a game like Left4Dead2 could see my fps increase by well over 50.

WoW is not CPU bound compared to other games, it just doesn't utilise the power of the CPU effectively. You are asking me to contest whether having a better CPU does not provide an upgrade for some peculiar reason. I am well aware that a better CPU will provide a better framerate. I am telling you that you don't need to overclock and shouldn't advise others that they do - and that GPU will overclock better for all intents and purposes for most games.

-

2010-10-04, 01:32 AM #32

8800gt is fine for WoW atm and I doubt you will see ANY increase in fps by upgrading your GPU. Upgrading your CPU would yield much better performance. You could get that i7, new board and DDR3 RAM and still use your 8800. It would be like playing a new game to you. You could also upgrade your GPU, see 0 difference and wonder why you wasted your money.

-

2010-10-04, 01:36 AM #33 The Lightbringer (Join Date: Aug 2010, Posts: 3,037)

No, it would definitely be a performance upgrade, I assure you, and I would put money on it. I don't turn graphics settings down to benefit my CPU. I am certain I could find this on the web somewhere, someone must have done a test already... brb

That didn't take long:

10 fps minimum gained from q6600 to i5 750 stocks and 1 fps extra on top of the i5 for having the nearest i7.

ANNNND finally the GPU, it doesn't have the 8800gt but it has the 3870 which is the equivalent in ATI, it also has the 8800gtx. It doesn't have a 460 but that's about the equivalent of a 285 (the 460 SSC FTW that I recommended would be marginally better). I didn't think my guesstimates would fall so accurately.

-

2010-10-04, 01:37 AM #34

Thank you. Maybe more people pointing this out will result in Sackman pulling his head out of the sand. I provide information we've discussed in this forum for months, personal accounts, and benchmarks. Sackman cries "nuh uh!".

Remember, trolling (including repeatedly and purposely providing false/incorrect information) is a bannable offense. In any other forum, wrong information costs someone time. In this forum, wrong information can potentially cost someone hundreds of dollars.

-

2010-10-04, 01:45 AM #35

Well, I recently built this PC in my sig. I had imported a 9800 gt from my old build, which was an Athlon 6400 with 4 gigs of RAM iirc. Now on my Athlon WoW was playable but it sucked: fps was bad, and I couldn't crank up graphics or spell detail or I would lag in 25's (sub 25 fps, down to 5 fps in heavy AoE). I have the SAME card, I just upgraded mobo, RAM, CPU etc. but kept the SAME card. Everything is cranked with the exception of shadows. Same card but night and day performance.

-

2010-10-04, 01:48 AM #36 The Lightbringer (Join Date: Aug 2010, Posts: 3,037)

I am providing accurate advice to the person - and safe advice, advising him how to SAVE money and avoid voiding his warranty unnecessarily. You are advising him to take silly risks. I am surprised you have moderator status, but am unsurprised that you would flaunt it as if to gain influence in what isn't an argument.

I will leave it be for now as teaching you benefits me in no way whatsoever, I just hope the OP listens to my advice and avoids putting themself in a position that could cost them a great amount of time and money because they wanted an extra few FPS.

---------- Post added 2010-10-04 at 02:53 AM ----------

You upgraded from an Athlon 6400 with 4 gigs of ddr2 to an i7 930 with 4 gigs of ddr3. I assume the ddr2 with the 6400 was 667?

The architecture alone would provide a substantial upgrade. We are not arguing whether an upgrade occurs, I am stating that:

1. Overclocks are not necessary, and constantly advising them to computer users who are not experienced enough with computers to even choose their own parts is stupid. Always get them the best they can - stock.

2. GPUs provide a greater benefit when overclocking compared to the CPU for most games.

~Sack out.

-

2010-10-04, 01:53 AM #37

No one said he had to overclock. This debate started because I asked him if he planned to OC his CPU, because originally he had TIM and a heat sink listed in his parts list. I asked if he was going to OC since he was considering buying them. Then someone chimed in that he should buy a 6 core, thus sparking the 6 core vs 4 core OC potentials. Then you came into the middle of this thread telling people not to OC as if someone told them they HAD to. So you saying Cilraaz told someone they had to OC their machine is way out of line IMO. OC'ing your machine is your choice and with any mods come risks. Make sure you weigh the risk vs reward and make an intelligent choice.

Last edited by Dethh; 2010-10-04 at 01:56 AM.

-

2010-10-04, 01:56 AM #38

My initial input into this thread was to not get a Phenom II x6. Someone else mentioned that theirs is fine when overclocked, so I mentioned that an x4 is even better than an x6 (for gaming) when overclocked and that Intel chips beat the pants off of any AMD chip when you're counting overclocking. You then came in and said "You don't need those few FPS that knowing how to do it provides". The rest has been me arguing that it's more than just a few fps, as has been proven repeatedly in this forum.

I never suggested for or against overclocking. I'm only speaking to the benefits of it. I know that not everyone wants to overclock or would feel comfortable doing so. It's each person's individual decision. However, you continually saying that buying a new video card will give these massive framerate gains is blatantly false. That's where my comment regarding trolling comes in. Someone could spend $300 buying a new video card on your suggestion and end up with zero (or near zero) performance increase.

In WoW: CPU increases minimum framerate, while GPU increases maximum framerate. A higher maximum framerate will not save someone from lagging during a 25-man boss. A higher minimum framerate will, and that's been proven repeatedly to be held up by the CPU, not the GPU.
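A toy model of that bottleneck, as a sketch only: it assumes CPU and GPU work on a frame overlap completely, so the slower of the two sets the frame time. The millisecond figures are invented for illustration and are not measurements from WoW.

```python
def fps(cpu_ms, gpu_ms):
    """Framerate when the slower of CPU and GPU sets the frame time.

    Assumes perfect overlap of CPU and GPU work, which real games
    only approximate.
    """
    return 1000.0 / max(cpu_ms, gpu_ms)


# Hypothetical 25-man boss fight: CPU needs 40 ms per frame, GPU 10 ms.
print(fps(40, 10))  # prints 25.0 (CPU-bound)
# Doubling GPU speed changes nothing while the CPU is the limit.
print(fps(40, 5))   # prints 25.0
# A faster CPU (30 ms per frame) raises the framerate.
print(f"{fps(30, 10):.1f}")  # prints 33.3
```

Under this model a GPU upgrade only shows up in scenes where the GPU time is the larger of the two numbers, such as an empty flight path.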

-

2010-10-04, 02:17 AM #39

-

2010-10-04, 04:58 AM #40 Titan (Join Date: Apr 2009, Posts: 14,326)

O'rly? So parts other than the GPU actually do make a difference? Who would have thought? After you've written 10 pages of pointless drivel here trying to explain how games are about GPU and not CPU? /gasp

Yes, minimum FPS while raiding in WoW depends entirely on raw CPU power. A bit over a year ago I changed an Athlon x2 4200+ for a PhenomII x3 720; of course the motherboard and RAM also went in the generation upgrade. I had bought a Radeon 4850 card earlier, thinking it would make a difference for WoW like it did for most other games. Well, you know what... It did nothing.

That little CPU/motherboard/RAM upgrade pushed FPS during ToC25hm raid from 10-15 at very low graphics into 20-25 with ultra-except-shadows on 1680x1050 resolution, all without touching GPU at all.

Of course overclocks are not necessary, but overclocks are made extremely easy today, up to the point where you can just click it on inside Windows when you're using a multiplier-unlocked AMD Black Edition Phenom. A processor which is advertised as being unlocked for overclocking. On a correctly built computer you can't break anything with it, and can get a free 20-40% speed with a few mouse clicks. Or you can opt to not do it.

GPUs indeed provide the greatest FPS gain for most games. And they do for WoW too, but only a maximum FPS gain. I don't think anybody gives a flying fuck if you turn off vsync and see 150 or 250 fps while on air taxi. It's totally inconsequential for the perceived input lag. What matters is minimum FPS, and for that the CPU is always the bottleneck as long as you have either a medium-range current-generation GPU or a high-end previous-generation GPU. You see, WoW is not like most games, and the GPU plays surprisingly little part in your raid performance.

Never going to log into this garbage forum again as long as calling obvious troll obvious troll is the easiest way to get banned.

Trolling should be.
