-

2012-12-04, 11:46 PM #121

-

2012-12-04, 11:48 PM #122

Oh FFS. You're throwing the term "leakage" around because you saw it on some slide. If you had any clue you would know that "leakage" in that regard is strictly referring to POWER leakage. Just... Stop. You're digging a hole that you clearly don't know how to get out of.

Are you going to turn around and say that the power leakage improvements on Piledriver magically boosted performance over BD by 10-20%? Lol.

ALSO, every single game linked in that benchmark is dual threaded.

i7-4770k - GTX 780 Ti - 16GB DDR3 Ripjaws - (2) HyperX 120s / Vertex 3 120

ASRock Extreme3 - Sennheiser Momentums - Xonar DG - EVGA Supernova 650G - Corsair H80i

build pics

-

2012-12-04, 11:49 PM #123 Warchief

- Join Date: Jun 2010
- Posts: 2,094

"The foundation of the architecture is still the same. It consists of modules, which contains two integer CPU cores that share a floating point core. Each module has 2 MB of L2-cache. The Vishera has four such modules, for a total of eight cores. In the Windows task manager you will see eight virtual cores. There will also be versions in which one or two modules have been disabled, leaving two or four cores active."

http://uk.hardware.info/reviews/3314...s-bulldozer-20

Nothing really changed except fixes.

-

2012-12-04, 11:51 PM #124 Epic!

- Join Date: Oct 2009
- Posts: 1,749

I've replaced every part of my computer except for the processor and motherboard. If I wanted to upgrade the processor, I'd need to buy a new motherboard anyway, so combining the two doesn't really change much for me. There's plenty of other components to swap out and customize.

-

2012-12-04, 11:52 PM #125

-

2012-12-04, 11:58 PM #126 Warchief

- Join Date: Jun 2010
- Posts: 2,094

-

2012-12-05, 12:03 AM #127

Sure. The foundation of Nehalem and Ivy Bridge i7s is the same too: 4 dedicated cores with hyperthreading to alleviate load balancing issues. I guess everyone that has upgraded since is just stupid.

You can't cheat by "overclocking", since overclocking literally means going over the stock clocks that the CPU came with. No one is saying the architecture was massively overhauled. It was revised for the better, which incidentally made everything under the i5 3570k price point an obvious win for AMD due to better stock performance and the ability to overclock.

If you want to sit there and apply hypothetical "if such and such processor was downclocked to such and such rate it would be fair" logic, then go for it. But no one cares.

Last edited by glo; 2012-12-05 at 12:06 AM.

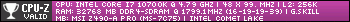


-

2012-12-05, 12:06 AM #128 Warchief

- Join Date: Jun 2010
- Posts: 2,094

-

2012-12-05, 12:10 AM #129

This is getting funny. You do realize that AMD "increasing IPC" would mean that they improved performance clock for clock over BD, RIGHT?!?!

IPC = the amount of crap the processor can push out per cycle.
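A rough sketch of the clock-for-clock point, assuming the usual simplification that single-thread throughput is roughly IPC times clock speed. The function name and every number here are made up for illustration; they are not Bulldozer or Piledriver measurements.

```python
def single_thread_throughput(ipc: float, clock_ghz: float) -> float:
    """Approximate throughput in billions of instructions per second.

    Simplified model (an assumption): throughput = IPC * clock frequency.
    Real workloads also depend on memory, caches, and turbo behavior.
    """
    return ipc * clock_ghz


# Hypothetical values: same clock, revised chip has 10% higher IPC.
old_chip = single_thread_throughput(ipc=1.00, clock_ghz=4.0)
new_chip = single_thread_throughput(ipc=1.10, clock_ghz=4.0)

# With the clock held equal, any gain comes entirely from IPC.
gain = new_chip / old_chip - 1
print(f"Clock-for-clock gain: {gain:.1%}")
```

In this model, holding the clock equal isolates the IPC change, which is why an "IPC increase" and a "clock for clock improvement" describe the same thing.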

Again, I suggest you stop before digging that hole any deeper. Also, food for thought, AMD and Intel don't push new architectures with every release. They never have and likely never will. There is always at least one revision before a new architecture is introduced. Vishera is a revision, and no one expected AMD to release a new architecture.

Sitting there and bashing on them for doing a revision (and an amazing one at that) is beyond ignorant.

Last edited by glo; 2012-12-05 at 12:12 AM.


-

2012-12-05, 12:26 AM #130 Titan

- Join Date: Oct 2010
- Location: America's Hat
- Posts: 14,141

I highly doubt BGA will replace LGA completely. In fact, there are only a few markets where it would actually be more cost effective: integrated systems that don't rely purely on performance, like all-in-one computers, laptops and media centers. The people who continue to pay Intel's bills are the enthusiasts, as well as custom build companies like Sager and pre-built manufacturers like Dell and HP. Intel would be absolutely stupid to enforce BGA as a hardware standard for PC gaming enthusiasts; that whole division of their product line would evaporate in a matter of months, and Intel would probably go out of business doing something that stupid. I don't think they are foolish enough to do it either. BGA just might be an alternative for some types of computers, while LGA will remain for the general PC consumers who build desktops or want to buy customized/pre-built machines.

-

2012-12-05, 12:28 AM #131 Warchief

- Join Date: Jun 2010
- Posts: 2,094

One thing is for sure: I'm never going to read an article about an AMD CPU, because you already know it's going to be crap. You could probably tell that from my behavior already. I listed just one thing they fixed, and you started digging deeper into architectures. That isn't the point here.

Get this: downclock the FX-8350 to the i3's frequency, run Cinebench 11.5 on both platforms with the process priority set to realtime, and pick the single-core option. Clearly the i3 is going to be the winner.

I was talking about single-thread performance the whole time.

>.>

-

2012-12-05, 12:30 AM #132

Haven't been using Intel.

I guarantee their sales will drop and other products will take their place.

-

2012-12-05, 12:33 AM #133

So now since you've been thoroughly proven wrong in every point you've made, you're going to resort to hating on AMD because "you already know it's going to be crap".

Nice. I suppose you only got into computing a couple years ago considering AMD has dominated Intel's entire line on numerous occasions. That point is lost on you however, since you refuse to accept correct information. Oh well.

Lastly, no one cares what performance would be like if you underclocked processors. Clock to clock performance between different architectures means nothing; if you had any clue you would know that.

-

2012-12-05, 12:41 AM #134 Warchief

- Join Date: Jun 2010
- Posts: 2,094

The only thing I've said about AMD was that the architecture was the same and some fixes were applied, such as the leakage, and that they've improved their IPC, because that was the only thing I knew and had read before.

I just saw this picture: http://content.hwigroup.net/images/a...ity-SLIDE4.PNG

That isn't the point; the discussion was about single-thread performance all along. As for the FX-8350's turbo frequency, I have no idea how it works. Is it 4.2GHz on every core, or does it depend on how many cores are in use? If the i3 had unlocked multipliers and you matched the clocks and ran the same Cinebench test I just described, the i3 would win.

-

2012-12-05, 12:46 AM #135

But the i3 doesn't win because it's locked and is at a lower frequency. Simple as that. Even if it was unlocked, (gaming) performance would still be in step with AMD after both were overclocked a considerable amount.

Considering that, the 6300 would still be a better buy regardless due to its superior multi-threaded performance over a dual core processor.

-

2012-12-05, 12:48 AM #136

Right.

This is not a "Let's take a dump on AMD"-thread.

Mind keeping this at least semi-related to how Intel's speculated decision to limit their CPU mounting is going to affect the market, rather than arguing over what CPUs exist today and making up results about them?

-

2012-12-05, 01:10 AM #137

It's pretty simple.

Gaming enthusiasts love customizing and building their computers, in the same way someone else might have a major car hobby and spend tons of money and time putting on new rims, engine modifications, lights, paint, etc. by themselves.

Taking away customization will piss off a lot of these people. Someone else linked a pie chart where something like 20% of Intel's income came from enthusiast sales in at least one way.

I, at the very least, would be willing to take a negligible performance hit in order to keep my "independence", for lack of a better term. Flexibility might be a better word there.

Nearly all games, and even stuff that isn't games, are making a major push toward utilizing GPU power over CPU power. There are inherent advantages to using the GPU, like being much larger physically, which gives heat a larger surface area to dissipate. That essentially means its performance is limited by its power consumption, which is not true for CPUs.

-

2012-12-05, 01:12 AM #138

You are welcome to keep talking about that.

Talking about how AMD CPUs suck >today< is irrelevant though.

-

2012-12-05, 07:52 AM #139

I don't think Intel can kill the enthusiast market. Just because CPUs are soldered doesn't mean the end of custom builds. I don't get it.

-

2012-12-05, 08:10 AM #140 Scarab Lord

- Join Date: Feb 2011
- Posts: 4,030

Instead of buying an Extreme4 and a 3570K separately, you'd probably just be buying an Extreme4 3570K. Kind of like how graphics cards are sold board and chip combined.
