Obviously DX11 took the place of DX10, but at the time 10.1 was superior and Nvidia made sure to mute 10.1 as much as possible.

> It's funny you bring up DX9 though, the ATI X800/X850 set couldn't support DX9.0c and HDR and ATI claimed it wasn't really needed, until Oblivion came along and you couldn't enable HDR on those cards.

The ATI cards that didn't support DX9.0c were junk. I bought an X850 for $100 years ago and ended up putting it into a business PC, where it was just good enough to put out a display in Windows 7. But in ATI's defense, HDR was possible without DX9.0c. Half-Life 2's HDR proves that. Also, a few games were patched by the community to allow DX9.0c games to be played on DX9.0b cards.

> The reason Time Spy utilized DX12 11_0 is because that is the base DX12 feature level that most cards support, and that all current DX12 games actually utilize. I'm sure they can enable 12_1 but then most AMD cards won't be able to run the benchmark. Should I claim the benchmark is now biased to AMD because of that?

Is there a problem supporting FL 11 through 12_1? As if Time Spy can only utilize one and only one feature level. Kinda odd that Maxwell cards support FL 12, but gain almost nothing from DX12. Software emulation?

> You are free to consider DX12 11_0 to be very near to DX11 but you would be wrong. The higher feature levels just include additional features on top of the DX12 base; DX12 11_0 brings all the advantages that DX12 as a whole has over DX11.

The thing that strikes me as odd is that DX11 also uses FL 11 as the highest level. You could give Time Spy DX11 and it would work just fine, but you wouldn't get the benefits of Async Compute. For a benchmark tool, having a DX11 option would be useful, because realistically all games will have DX11/DX12 options. I'm not the only one who thinks Time Spy is a DX11 game with DX12 features thrown at it.

But the main issue for Time Spy is their choice of Pre-emption Compute which is less Async Compute like and doesn't benefit AMD as much. Time Spy is just not making good use of Async Compute. But again, why not make more than one code path to benefit Nvidia and AMD, to maximize performance?

Compute queues as a % of total run time:

Doom: 43.70%

AOTS: 90.45%

Time Spy: 21.38%

http://www.overclock.net/t/1606224/v...#post_25358335

> I... what? How on earth did you come to this conclusion at all? You just literally made shit up! Your opinion =/= fact.
>
> "Dur, it's been 1 month since Doom came out and Vulkan is not out yet, Doom will never have Vulkan. It would have been done by now."

It is my opinion. I'm just usually right about outcomes. Feel free to tell me I'm wrong once Nvidia fixes Doom's Async problem. I'll be waiting. No I won't.

> All issues that the dev has stated they will be fixing in a future patch. How on this green Earth did that lead you to your conclusion? Christ you are not even trying to hide your bias now.

The Ashes of the Singularity devs said the same thing, but nothing so far. I'm going by history: how many games has Nvidia "fixed" Async Compute in? Is this going to be a theme now, where Nvidia owners have to wait for a fix months later? DX12/Vulkan/Async Compute aren't new things. These are standards that have been worked on for years, and Nvidia was involved in the development process. They had more than enough time to get proper working drivers for these features, just like AMD has. Is each DX12/Vulkan game that's released going to go through this?

My bias is with the consumer, and if Nvidia screwed up then they should own up to it. Time Spy does not represent how DX12 games currently work, and will work. Time Spy doesn't use parallel computing, and is a bad representation of DX12 games.

> DX12 mode, maxed in game settings?

Explanation of settings used. Not everyone uses max settings in their benchmarks, and not everyone explains it. The person who made that is very precise.

Thread: Gtx 1080

-

2016-07-26, 10:36 PM #1921

-

2016-07-26, 10:48 PM #1922

If it's in Doom for AA, why does it still work with no AA? How do you know it's being used for AA in this case?

The quote literally spells it out for you:Has said nothing about going on a per SM level. And since you used Anandtech, then go look at the next page.

It's something that's for AC also. Preemption is extremely broad, yes, but it is something that can and is being used for AC as a bandaid solution. Even noted by AMD a year ago.

https://youtu.be/v3dUhep0rBs?t=96

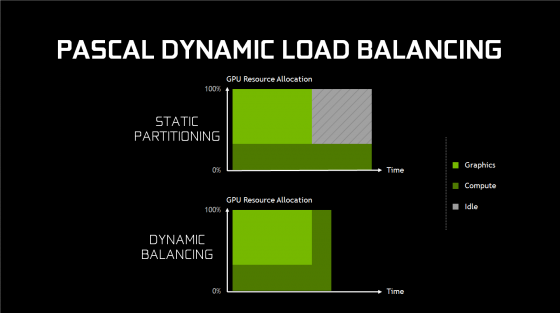

> Before we start, in writing this article I spent some time mulling over how to best approach the subject of fine-grained preemption, and ultimately I’m choosing to pursue it on its own page, and not on the same page as concurrency. Why? Well although it is an async compute feature – and it’s a good way to get time-critical independent tasks started right away – its purpose isn’t to improve concurrency.

It's an async feature, but it is not used to improve concurrency. That is what Dynamic Load Balancing is for: allowing any idle resources (in this case SM units) to switch between compute and graphics as needed. Something that was not available on Maxwell.

It's in the goddamn whitepaper (which you said you read):

http://international.download.nvidia...aper_FINAL.pdf

> For overlapping workloads, Pascal introduces support for “dynamic load balancing.” In Maxwell generation GPUs, overlapping workloads were implemented with static partitioning of the GPU into a subset that runs graphics, and a subset that runs compute. This is efficient provided that the balance of work between the two loads roughly matches the partitioning ratio. However, if the compute workload takes longer than the graphics workload, and both need to complete before new work can be done, and the portion of the GPU configured to run graphics will go idle. This can cause reduced performance that may exceed any performance benefit that would have been provided from running the workloads overlapped. Hardware dynamic load balancing addresses this issue by allowing either workload to fill the rest of the machine if idle resources are available.

That is referring to the SM units. Another write-up about it:

http://www.eteknix.com/pascal-gtx-10...pute-explored/

> This GPU level means that once a graphics/compute task is finished, the idle SMs can now be added to those working on another graphics/compute task, speeding up completion. This should allow for much better async compute performance compared to Maxwell.

But as Anandtech notes, since Maxwell (and Pascal) are such efficient GPU architectures, gains would be minimal:

> Right now I think it’s going to prove significant that while NVIDIA introduced dynamic scheduling in Pascal, they also didn’t make the architecture significantly wider than Maxwell 2. As we discussed earlier in how Pascal has been optimized, it’s a slightly wider but mostly higher clocked successor to Maxwell 2. As a result there’s not too much additional parallelism needed to fill out GP104; relative to GM204, you only need 25% more threads, a relatively small jump for a generation. This means that while NVIDIA has made Pascal far more accommodating to asynchronous concurrent execution, there’s still no guarantee that any specific game will find bubbles to fill. Thus far there’s little evidence to indicate that NVIDIA’s been struggling to fill out their GPUs with Maxwell 2, and with Pascal only being a bit wider, it may not behave much differently in that regard.
>
> Meanwhile, because this is a question that I’m frequently asked, I will make a very high level comparison to AMD. Ever since the transition to unified shader architectures, AMD has always favored higher ALU counts; Fiji had more ALUs than GM200, mainstream Polaris 10 has nearly as many ALUs as high-end GP104, etc. All other things held equal, this means there are more chances for execution bubbles in AMD’s architectures, and consequently more opportunities to exploit concurrency via async compute. We’re still very early into the Pascal era – the first game supporting async on Pascal, Rise of the Tomb Raider, was just patched in last week – but on the whole I don’t expect NVIDIA to benefit from async by as much as we’ve seen AMD benefit. At least not with well-written code.

It pans out with Time Spy perfectly: AMD gains over 10% while Nvidia is roughly half of that. That is part of the reason why Nvidia gets so little gain transitioning to DX12; their GPUs were already pretty efficient under DX11, whereas AMD's were not.
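To make the whitepaper's static partitioning vs. dynamic load balancing point concrete, here is a minimal toy model in Python. It is only a sketch under loud assumptions: work is measured in abstract units, the whole GPU processes one unit per time step, and "dynamic" is idealized as zero idle time. The function names and the 60/40 workload are hypothetical and do not describe how any real driver or GPU scheduler is implemented.

```python
def static_partition_time(graphics_work, compute_work, graphics_share):
    """Time until BOTH workloads finish when the GPU is split up front.

    graphics_share is the fraction of the GPU reserved for graphics;
    the remainder runs compute. Whichever side finishes first sits idle.
    """
    if not 0.0 < graphics_share < 1.0:
        raise ValueError("graphics_share must be strictly between 0 and 1")
    graphics_time = graphics_work / graphics_share
    compute_time = compute_work / (1.0 - graphics_share)
    return max(graphics_time, compute_time)


def dynamic_balance_time(graphics_work, compute_work):
    """Idealized dynamic balancing: idle units immediately take on the
    other workload, so the whole GPU stays busy until all work is done."""
    return graphics_work + compute_work


if __name__ == "__main__":
    # Hypothetical frame: 60 units of graphics work, 40 units of compute.
    for share in (0.6, 0.5, 0.8):
        static = static_partition_time(60, 40, share)
        dynamic = dynamic_balance_time(60, 40)
        print(f"graphics share {share:.1f}: static {static:.1f}, dynamic {dynamic:.1f}")
```

In this model the static split only matches the dynamic case when the partition ratio happens to match the workload ratio; any mismatch leaves part of the GPU idle, which is the situation the whitepaper passage describes.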

Frankly this entire debate is utterly pointless and I'm leaving it, no matter how many graphs or quotes or articles I link you continue to ignore everything I bring up and generally make claims without anything to actually prove your point - I'm not the first one noting this either as Mater Guns noted on the previous page. You're debating in bad faith and outright claim things that are not true, even after I've linked something that proves otherwise!

I'm confident that if another benchmark or game were to come out that Nvidia performs well on (not winning mind you, the Geforce 1060 dares to be equal to an RX480 in Time Spy) then it's obviously a flawed benchmark or Nvidia bought them out, or some other conspiracy theory that would be kept spreading by so-called "forum experts". Hell I'm not even hating on AMD here, they have a competitive DX12 architecture that has basically closed the gap between them and Nvidia (and frankly surpassed Maxwell). If Vega was out and faster than the 1080 I would have bought it in a heartbeat; alas it is not and I really couldn't wait almost a year for it.

Feel free to bask in your "victory".

- - - Updated - - -

Time Spy uses parallel computing, I've actually linked stuff proving this. Feel free to keep ignoring it. It gets very similar results to the only other engine that was built from the ground up for DX12 (Ashes). Pascal literally just launched a couple months ago, we have had only 3 releases/patches in that time frame. Doom, Time Spy, and Rise of the Tomb Raider.

Out of those 3, 2 support Async on Pascal while the other is working on a patch. Even Ashes of the Singularity (which has no specific patch to enhance Pascal performance) gets a boost out of DX12 and Async on Pascal:

Sigh, I'm actually done with you as well, debating someone who actually said "It is my opinion. I'm just usually right about outcomes." is pointless. It's basically the equivalent of debating Trump.

-

2016-07-26, 11:06 PM #1923

Because for AC you need to synchronize things with fences, and if AA is on that list, it has to be programmed specifically for a certain AA method or not be in there at all.

> The quote literally spells it out for you:
>
> It's a Async feature, but is not used to improve concurrency, that is what Dynamic Load Balancing is for, allowing any idle resources (in this case SM units) to switch between compute and graphics as needed. Something that was not available on Maxwell.
>
> It's in the goddamn whitepaper (which you said you read):
>
> http://international.download.nvidia...aper_FINAL.pdf
>
> http://gfxspeak.com/wp-content/uploads/2016/05/load-balancing-e1463555785958.png
>
> That is referring to the SM unit's. Another write up about it:
>
> http://www.eteknix.com/pascal-gtx-10...pute-explored/

In your etenix

Even with all of these additions, Pascal still won’t quite match GCN. GCN is able to run async compute at the SM/CU level, meaning each SM/CU can work on both graphics and compute at the same time, allowing even better efficiency.

And frankly that's the misconception of asynchronous compute. It's called Multi-engine in documentation for a reason.

> But as Anandtech notes since Maxwell (and Pascal) are such efficient GPU architectures gains would be minimal:
>
> It pans out with Time Spy perfectly, AMD gains over 10% while Nvidia is roughly half of that. Part of the reason why Nvidia gets so little gains transitioning to DX12, the GPU's were already pretty efficient under DX11 whereby AMD was not.

> Frankly this entire debate is utterly pointless and I'm leaving it, no matter how many graphs or quotes or articles I link you continue to ignore everything I bring up and generally make claims without anything to actually prove your point - I'm not the first one noting this either as Mater Guns noted on the previous page. You're debating in bad faith and outright claim things that are not true, even after I've linked something that proves otherwise!
>
> I'm confident that if another benchmark or game were to come out that Nvidia performs well on (not winning mind you, the Geforce 1060 dares to be equal to a RX480 in Time Spy) then it's obviously a flawed benchmark or Nvidia bought them out, or some other conspiracy theory that would be kept spreading by so-called "forum experts". Hell I'm not even hating on AMD here, they have a competitive DX12 architecture that has basically closed the gap between them and Nvidia (and frankly surpassed Maxwell), if Vega was out and faster then the 1080 I would have bought it in a heartbeat, alas it is not and I really couldn't wait almost a year for it.
>
> Feel free to bask in your "victory".

Sure... I don't really care about victory. I only care about how things work and tech improvement. Benchmarks are dumb, period. They serve no gaming purpose in the world.

And that you would buy it in a heartbeat... quite honestly, don't bother with those statements. Everything you've posted has either been heavily in favor of Nvidia or against AMD. I know where my bias is, and that's quite honestly a hater. I've seen these posts way too many times to believe that the people who make them would ever have considered the other side.

-

2016-07-27, 12:40 AM #1924

This thread is a mess. You can't prove it, just like I can't prove it, because we don't have access to the code. What we know is that Time Spy spends far less of its run time in compute queues than Doom and Ashes, which makes it a very poor Async Compute test. Feel free to interpret this image of Doom, Ashes, and Time Spy and how they queue up data for an R9 390, cause I can't make heads or tails of what's going on here.

http://i.imgur.com/s51q4IX.jpg

> It gets very similar results to the only other engine that was built from the ground up for DX12 (Ashes). Pascal literally just launched a couple months ago, we have had only 3 releases/patches in that time frame. Doom, Time Spy, and Rise of the Tomb Raider.
>
> Out of those 3, 2 support Async on Pascal while the other is working on a patch. Even Ashes of the Singularity (which has no specific patch to enhance Pascal performance) gets a boost out of DX12 and Async on Pascal:

According to this link, the Pascal cards don't actually benefit at all in DX12/Vulkan games.

https://docs.google.com/spreadsheets...WVo/edit#gid=0

Cherry picking benchmarks doesn't prove a point, cause everyone's scores are all over the place. Out of the websites that tested both DX11 and DX12, the 1060 has gained no increase in performance. Some show the RX 480 faster, and some slower. On average the 1060 is 1.56% faster in Ashes, but not because it benefits more from DX12.

Ars Technica DX11=45 DX12=45

Gamers Nexus DX11=45.32 DX12=46.4

LanOC DX11=38 DX12=35.5

TechSpot DX11=59 DX12=58

Hardware Unboxed DX11=48 DX12=46

On average the 1060 gains nothing from DX12. That's all within the margin of error.
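As a quick sanity check on the five results listed above, the per-site DX11 to DX12 change and its simple mean can be worked out in a few lines of Python. This is only a sketch: the numbers are copied from this post as written (units not stated here), each site is weighted equally, and the helper names are made up for illustration.

```python
# 1060 results in Ashes as listed in the post: (DX11, DX12)
RESULTS = {
    "Ars Technica": (45.0, 45.0),
    "Gamers Nexus": (45.32, 46.4),
    "LanOC": (38.0, 35.5),
    "TechSpot": (59.0, 58.0),
    "Hardware Unboxed": (48.0, 46.0),
}


def percent_change(dx11, dx12):
    """Relative change going from DX11 to DX12, in percent."""
    return (dx12 - dx11) / dx11 * 100.0


def mean_percent_change(results):
    """Unweighted mean of the per-site percent changes."""
    changes = [percent_change(dx11, dx12) for dx11, dx12 in results.values()]
    return sum(changes) / len(changes)


if __name__ == "__main__":
    for site, (dx11, dx12) in RESULTS.items():
        print(f"{site}: {percent_change(dx11, dx12):+.2f}%")
    print(f"mean: {mean_percent_change(RESULTS):+.2f}%")
```

With these five data points the mean comes out slightly negative (around -2%), which fits the point that the 1060 shows no DX12 gain here. Whether a couple of percent counts as margin of error depends on each site's run-to-run variance, which isn't given.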

https://docs.google.com/spreadsheets...WVo/edit#gid=0

> Sigh, I'm actually done with you as well, debating someone who actually said "It is my opinion. I'm just usually right about outcomes." is pointless. It's basically the equivalent of debating Trump.

Unless someone here is a software engineer who actually codes in Vulkan and DX12, everything so far is just opinion. An educated opinion, but nothing more. You know for certain that Nvidia will have Async Compute because their coding engineers are working with the hands of God to find those extra frames per second. That's the same rhetoric they gave with Ashes and Maxwell, and you still believe them. The only way this would work is if the game engine were retooled in favor of Nvidia, which means far less compute load. This is basically the tessellation issue with AMD, but now it's the Async Compute issue with Nvidia. Except we aren't getting crap like this from AMD.

There's a lot more politics involved than engineering with Doom, as I'm sure Nvidia is trying to convince game developers to make their games a certain way. Ashes and Doom are probably not going to budge from this, which is why you'll never see AC "fixed". Cause really, how hard is it to get this working properly? AMD has terrible drivers, right? Why are their Vulkan and DX12 drivers working fine? This is just simple elementary stuff.

-

2016-07-27, 12:52 AM #1925

I'm done with the entire debate and I'm not going to address the rest of your points, but a hater? Come on now, no need to make up falsehoods, I've never once hated on AMD, but feel free to look at my post history in this thread to try and find it. All I've done is defend Nvidia and Futuremark against some of the really dumb things mentioned in this thread. At most I'm disappointed that the gap to Vega is so long and that the efficiency of Polaris is not as good as it could be. Oh and that AMD cards don't support the highest feature levels of DX12. I've even suggested in other threads that an AIB Radeon RX480 and Geforce 1060 are both good buys, oh and that AMD has done very well in DX12 and gets more out of Async than Pascal does.

I've always bought what has been the best performing card that I could afford at the time, my recent card history has been a Geforce 1080, Geforce 980, Radeon 7970, Radeon 6970, Geforce 9800 GX2, Radeon x1900 and Radeon x800. I tend to buy every two years or so. When 2018 rolls around I'll see what the best card I can get for $500-$800 and buy it, I really don't give a damn be it AMD or Nvidia.

Defending Nvidia =/= hating AMD. But feel free to attack me on something I haven't even done.

-

2016-07-27, 01:00 AM #1926

-

2016-07-27, 02:07 AM #1927

-

2016-07-27, 04:18 AM #1928

I personally couldn't care about Vega as well as the new Titan X. Well out of reach of the normal consumer. Showcase cards like buying a Ferrari.

> and that the efficiency of Polaris is not as good as it could be.

I agree but it is a cheap graphics card, and the inefficiency is minor unless you plan to Bitcoin farm with these cards?

> Oh and that AMD cards don't support the highest feature levels of DX12.

Also minor, since AMD cards seem to benefit the most from DX12.

> Defending Nvidia =/= hating AMD. But feel free to attack me on something I haven't even done.

Defending Nvidia on DX12/Vulkan is not useful to consumers. It'll make Nvidia owners feel better about their purchases, but not much else. How are consumers rewarded? Founders Edition priced cards? Fixing Async Compute months later, if even?

As for 3Dmark Time Spy, I really hate synthetic tests. It's nice for a quick test, but no real value when comparing products to buy. Right now, benchmark results per game really depends if AMD or Nvidia was deeply involved in the development process of the game. I would say Doom is the most neutral game, cause it's known that Nvidia had some involvement with Doom. As you said they're still working to get Async Compute running.

I really miss John Carmack, cause he would tell it as it is, and he's no fan of ATI/AMD. Sadly, he's in Oculus hell now. Could really use someone like him who's not biased, to speak about these API's and how they work for AMD and Nvidia. Sadly, the only thing he cares about Vulkan is that it runs on Android.

Oh and Nvidia's Tegra chip is in Nintendo's NX console. Thought that was big news.

-

2016-07-27, 05:04 AM #1929

If it's Tegra X1, nothing to write home about. Nvidia has been constantly losing in the mobile department to Qualcomm and Samsung.

-

2016-07-27, 06:14 AM #1930

Ah, the good ol' "I'm not hating AMD, I'm just going to actively try and disrupt any conversation that could possibly benefit AMD at all".

-

2016-07-27, 06:19 AM #1931

-

2016-07-27, 07:10 AM #1932

Got my GTX 1070 yesterday, tested it for a bit with the Stock Cooler and then slapped the Arctic Accelero Xtreme 4 on it.

Ran Unigine Heaven Ultra for 30 minutes.

Stock 80C @ 70% Fan Speed.

Arctic 48C @ 33% Fan Speed.

Right now it's doing 1936 MHz on its own, which is pretty nice.

(upgraded from a 4 years old GTX 680)

-

2016-07-27, 01:13 PM #1933

-

2016-07-27, 08:05 PM #1934

Why would Nvidia stand to gain much from DX12 when their DX11 driver optimization is already that good? AMD cards have generally had a great performance advantage when reading raw GFLOPS values, yet they fell behind when benchmarking in actual games. That is also likely the reason why a 290X walks over the GTX 780 Ti today, while they were neck and neck at release. AMD's DX11 drivers could be improved upon greatly and then were improved upon, while Nvidia had no leeway in that area.

DX12 (Mantle) seems based upon the idea from AMD that a low-level API would help their own cards greatly, as they haven't had the resources to optimize in time at the high level.

I.e. any DX12 or Vulkan benchmark result with the RX 480 is probably a nice pointer to where that card will stand performance-wise in a year or two. Probably also in some DX11 titles as the drivers mature.

-

2016-07-27, 09:24 PM #1935

Again, not a driver optimization issue. AMD has hardware since GCN 1.0 that was never exposed because DX11 went on far longer than AMD had anticipated. But honestly, Nvidia doesn't need to go crazy on DX12/Vulkan cause their cards perform very well. The problem begins when Nvidia makes promises on potential for improvement, when it clearly won't happen. Pascal cards are just not going to get much faster with DX12/Vulkan.

> Also likely the reason to why a 290X walks over the GTX 780Ti today while they were neck in neck during release.. DX11 drivers could be improved upon greatly and then was improved upon, while Nvidia had no leeway in that area.

Most likely AMD decided to make tweaks per game, by trying to utilize that dormant hardware that couldn't be utilized otherwise. Not something a consumer wants when your performance is dependent on driver updates.

> Ie any DX12 or Vulcan benchmark result with the RX 480 is probably a nice pointer of where that card will stand performance-wise in a year or two. Probably also in some DX11 titles as the drivers mature.

All new games being released are going to be DX12/Vulkan games. And the vast majority of DX12 titles in 2016 are partnering with AMD. Any games that haven't announced DX12/Vulkan could still have this feature.

Final Fantasy XV dx12 September 30th

Civilization VI dx12 October 21st

Battlefield 1 dx12 October 21st

Deus Ex: Mankind Divided August

BTW, AdoredTV did a Maxwell vs Pascal to see if he's right that Pascal is just Maxwell 2.0. No surprise, but yes.

-

2016-07-27, 09:36 PM #1936

So Maxwell 3.0, with slightly lower IPC but way higher clocks, loses to Maxwell 2.0 when they're compared with the same clocks? And performs exactly the same when they're run with the same theoretical TFLOPS (due to different arrangement of the two chips used)?

How didn't anyone think about this before? /sarcasm


Seriously... This isn't anything unexpected. We've been telling this for a while now, the uarch diagram doesn't lie. They're basically the same thing.

-

2016-07-27, 09:55 PM #1937

You're both saying it's not a driver issue then dressing the driver updates as something else than just a driver issue?

> BTW, AdoredTV did a Maxwell vs Pascal to see if he's right that Pascal is just Maxwell 2.0. No surprise, but yes.
>
> https://www.youtube.com/watch?v=nDaekpMBYUA

At the end of the day AdoredTV is basically a forum "talker" like the rest of us, but with a YouTube channel. He has no deep insight or knowledge of the architectures and hasn't exactly been spot on in his predictions so far. I'm definitely a subscriber, but everything must be consumed with a grain of salt. For this comparison to be relevant, he should first of all have disabled Turbo properly through a vBIOS flash, and then run a myriad of additional benchmarks. Also, he for some reason came up with a new rule that advancements in clock speed must be a result of changes in process node. Architecture changes can have a major impact on what clock speeds you can achieve. That both "architectures" (possibly) have the same performance at the same clock speed isn't saying it all.

-

2016-07-27, 10:22 PM #1938

Equal clocks favored the 980 Ti, cause it has more cores. So he had to equalize the FLOPS by doing math. This isn't really a bad thing on Nvidia's end, cause they are obviously putting more effort elsewhere. Simple tweaks to Maxwell with a 16nm clock boost are enough to keep Nvidia ahead in the market, while they work on Tegra or something better than Pascal. Also good for AMD, cause they have time to develop for better efficiency, which they need, cause if anything better was released I don't see AMD being relevant in the market.
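The "equal the flops by doing math" step can be sketched as follows. Assumptions, stated loudly: peak FP32 throughput is taken as 2 FLOPs per shader core per clock (one fused multiply-add), the 980 Ti has 2816 shader cores, and a GTX 1080 (2560 cores) stands in for the Pascal card, since this thread does not say which card or which clocks the video actually used. The 1000 MHz reference clock is purely illustrative.

```python
def fp32_tflops(shader_cores, clock_mhz):
    """Theoretical peak FP32 TFLOPS, assuming 2 FLOPs per core per clock."""
    return 2 * shader_cores * clock_mhz * 1e6 / 1e12


def matching_clock_mhz(ref_cores, ref_clock_mhz, other_cores):
    """Clock the other card needs to reach the reference card's peak FLOPS."""
    return ref_cores * ref_clock_mhz / other_cores


if __name__ == "__main__":
    GTX_980_TI_CORES = 2816  # Maxwell
    GTX_1080_CORES = 2560    # Pascal stand-in (assumption, see lead-in)
    ref_clock = 1000.0       # hypothetical 980 Ti clock in MHz

    target = matching_clock_mhz(GTX_980_TI_CORES, ref_clock, GTX_1080_CORES)
    print(f"980 Ti @ {ref_clock:.0f} MHz: {fp32_tflops(GTX_980_TI_CORES, ref_clock):.3f} TFLOPS")
    print(f"1080 needs {target:.0f} MHz: {fp32_tflops(GTX_1080_CORES, target):.3f} TFLOPS")
```

Matching theoretical FLOPS this way says nothing about ROPs, memory bandwidth, or boost behavior, so it is a rough normalization rather than a like-for-like comparison.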

Of course it could also be that Nvidia was so busy working on pet projects like Tegra, that they didn't have the engineering time to improve on their desktop GPUs and just farted out Pascal to keep market dominance.

- - - Updated - - -

That's right, cause it's an API issue. You can't add hardware features without the API being able to expose them. So I think AMD tried to tweak their drivers to make Async Compute work in DX11 games.

You can see this with Linux drivers, as AMD's drivers are far worse than Nvidia's, even compared to Nouveau given that you can reclock the cards. But Vulkan would properly expose that hardware. You can add all the features you want on a graphics card, but without a proper API to expose it, it might as well just be sitting there eating electricity.

> Also, he for some reason came up with a new rule that advancements in clock speed must be a result of changes in process node. Architecture changes can have a major impact on what clock speeds you can achieve. That both "architectures" (possibly) have the same performance at the same clock speed isn't saying it all.

A die shrink is usually for higher clocks, though nowadays it's more for lower power consumption. Equal performance for equal clock shows no actual increase in efficiency between Pascal and Maxwell.

-

2016-07-27, 11:22 PM #1939

Is this supposed to be news? I even noted as much in a previous post:

Yes, when it comes to DX11 games you are going to see very little improvement over Maxwell (assuming TFLOP is equal). The advantages of Pascal are in the much higher clock speeds, better power efficiency and the new technologies added that can benefit DX12 and VR.

He is also not actually doing an accurate comparison; the 980ti is a 96 ROP card and is going to benefit in heavy render output situations (high AA and higher resolutions), a better comparison would have been to use a 980 and 1070/80 at the same TFLOP rating as they are both 64 ROP cards and it would have been a more direct comparison.

I would have liked to see an Ashes comparison as well, as the 980 gains nothing from DX12+async while the 1080 does.

-

2016-07-30, 06:39 PM #1940

wow interesting read the last couple of pages..... should make time to read more often and play less games lol.

Hats off to you Zenny.
