It's aimed at graphic designers and such.

Thread: Let's Talk about 4k resolution.

-

2013-02-02, 03:37 AM #21

-

2013-02-02, 03:46 AM #22

4k video in and of itself is not that big of a deal. The reason we have to wait so long is more a matter of developing practical codecs for the format, plus storage and bandwidth issues. If you were to own a digital 4k copy of The Hobbit (which was filmed in 4k @ 48fps, so twice the data of a 24fps film from frame rate alone; it was also filmed in stereo, so actually 4 times the data /agonize), it'd take up a humongous amount of space.

4k is not actually a particularly high resolution. It's only around 8-9 megapixels, which is less than almost any decent snapshot camera, and it's completely dwarfed by medium format digital cameras, the majority of DSLRs, and astronomical/industrial/medical CCD/CMOS cameras. The problem is motion picture (i.e. video) and the data rates. For example, an astrophotography CCD might create 16-bit raw files at 4096x4096 resolution, which clock in at 32MB per image. Times 30 FPS, and you're close to a gigabyte per second! Obviously 16-bit data is completely impractical for common use, since most screens can only display 8 bits of depth (per subpixel), but still.
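The arithmetic above is easy to check. A rough sketch in Python, assuming uncompressed frames with one 16-bit sample per pixel (a monochrome sensor); the function names are made up for illustration:

```python
# Back-of-the-envelope check of the megapixel and data-rate figures.

def megapixels(width: int, height: int) -> float:
    """Pixel count in millions."""
    return width * height / 1e6

def raw_frame_bytes(width: int, height: int, bits_per_sample: int,
                    samples_per_pixel: int = 1) -> int:
    """Size of one uncompressed frame in bytes."""
    return width * height * samples_per_pixel * bits_per_sample // 8

uhd_mp = megapixels(3840, 2160)   # consumer "4k" (UHD)
dci_mp = megapixels(4096, 2160)   # cinema 4k (DCI)

ccd_frame = raw_frame_bytes(4096, 4096, bits_per_sample=16)
ccd_rate = ccd_frame * 30         # bytes per second at 30 FPS

print(f"UHD: {uhd_mp:.2f} MP, DCI 4k: {dci_mp:.2f} MP")
print(f"CCD frame: {ccd_frame / 2**20:.0f} MiB")
print(f"CCD at 30 FPS: {ccd_rate / 1e9:.2f} GB/s")
```

Both 4k variants land between 8 and 9 megapixels, and the 4096x4096 16-bit frame is exactly 32 MiB, so 30 of them per second is about one gigabyte.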

H.264 did a great deal of work to make 720p and 1080p as accessible as they are now, and H.265 (HEVC) is being developed now by the joint ITU-T/MPEG video coding team; at least in some circumstances it is going to halve the bandwidth. So, a 720p stream at 30 FPS might ask for as little as 0.5Mbit/s. I think standards like H.265 are going to need to be in place before we see 4k or related resolutions becoming easily accessible or even mainstream.

Personally I'm more excited about higher pixel density than about large screens. I've never wanted, and doubt I ever will want, a large screen. A 4k screen close up is not going to appear any sharper than a 1080p screen at a distance; that's spatial resolution. This is why I often argue that 27" screens for gaming etc. are complete and utter idiocy: you sit close to a computer screen, meaning that a 27" screen is going to look poop versus a 21/22" screen at the same resolution, due to pixel size, number, and distance.
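One way to put numbers on the size/distance trade-off is pixels per degree of visual angle. A minimal sketch, assuming a flat screen viewed head-on; the helper name and the example sizes and distances are illustrative, not taken from any spec:

```python
import math

def pixels_per_degree(diagonal_in: float, h_px: int, v_px: int,
                      distance_in: float) -> float:
    """Approximate pixels covered by one degree of visual angle."""
    ppi = math.hypot(h_px, v_px) / diagonal_in
    # Width of screen (in inches) covered by one degree at this distance.
    inches_per_degree = 2 * distance_in * math.tan(math.radians(0.5))
    return ppi * inches_per_degree

# Same 1080p resolution, same 24-inch viewing distance, different sizes.
ppd_22 = pixels_per_degree(22, 1920, 1080, 24)
ppd_27 = pixels_per_degree(27, 1920, 1080, 24)

# A 4k panel at half the distance versus a 1080p panel of the same size.
ppd_4k_close = pixels_per_degree(22, 3840, 2160, 12)

print(f"22in 1080p at 24in: {ppd_22:.1f} px/deg")
print(f"27in 1080p at 24in: {ppd_27:.1f} px/deg")
print(f"22in 4k    at 12in: {ppd_4k_close:.1f} px/deg")
```

At the same resolution and distance the smaller panel packs more pixels into each degree, and a 4k panel at half the distance gives the same figure as the 1080p panel of the same size.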

A 22" screen (gives a great field of view for me personally, so I prefer them) with ~4k resolution? Now that I would be interested in.

Last edited by Mythricia; 2013-02-02 at 03:56 AM. Reason: More thoughts

I don't know half of you half as well as I should like, and I like more than half of you more than you deserve.

-

2013-02-02, 03:52 AM #23

-

2013-02-02, 04:06 AM #24

-

2013-02-02, 04:12 AM #25

24" is to me the optimal size, I feel that 21" is a bit too small and 27" makes me want to move my head around to look in all the corners.

Intel i5-3570K @ 4.7GHz | MSI Z77 Mpower | Noctua NH-D14 | Corsair Vengeance LP White 1.35V 8GB 1600MHz

Gigabyte GTX 670 OC Windforce 3X @ 1372/7604MHz | Corsair Force GT 120GB | Silverstone Fortress FT02 | Corsair VX450

-

2013-02-02, 04:19 AM #26

Hey higher ftw.

I personally have an Acer S211HL as my main display, which is a 21.5" (I think that's the actual size of the viewable screen, may be wrong) and it has awesome pixel density, but still, a 1440p monitor is good just for the sake of more screen real estate to work with.

-

2013-02-02, 04:37 AM #27

-

2013-02-02, 04:37 AM #28

Definitely, the extra space is really nice. At the moment I'm using a 1680x1050 screen myself (I like the 16:10 format, but the rest of the world seems to like 16:9!), so I'm on the opposite end, it can get cramped.

The difference is just that when you downscale compressed high-resolution material (such as YouTube 4k) to a lower resolution, the result is usually perceived as sharper, because normal YouTube footage shows more of its compression artefacts. Watching, say, 720p from YouTube, many of those pixels will be blurred or deformed slightly, as is the nature of compression. But when you downsample 4k video to fit on a 1440x900 screen, those compression artefacts kind of get lost. It's still only 1440x900 though, yeah.

It can potentially look absolutely awful as well. If you're unlucky, the number of video pixels being crammed into one screen pixel works out to an awkward, non-integer ratio, and you get weird artefacts and it just looks horrible. The image becomes aliased.

Last edited by Mythricia; 2013-02-02 at 04:44 AM.
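To see which combinations produce awkward ratios, here is a small sketch that computes how many source pixels land on each screen pixel. Treating an integer ratio as "clean" is a simplifying assumption; real scalers filter the image, so a non-integer ratio does not automatically look bad. The function names are hypothetical:

```python
from fractions import Fraction

def scale_ratios(src: tuple[int, int], dst: tuple[int, int]):
    """Source pixels per destination pixel, horizontally and vertically."""
    return Fraction(src[0], dst[0]), Fraction(src[1], dst[1])

def is_clean_downscale(src: tuple[int, int], dst: tuple[int, int]) -> bool:
    """True when both axes shrink by the same whole-number factor."""
    rx, ry = scale_ratios(src, dst)
    return rx == ry and rx.denominator == 1

uhd = (3840, 2160)
for screen in [(1920, 1080), (1280, 720), (1440, 900)]:
    rx, ry = scale_ratios(uhd, screen)
    verdict = "clean" if is_clean_downscale(uhd, screen) else "awkward"
    print(f"{uhd} -> {screen}: {float(rx):.3f} x {float(ry):.3f} ({verdict})")
```

Going from 3840x2160 to 1440x900 gives 8/3 horizontally and 12/5 vertically, which also means the aspect ratios differ, so the picture has to be cropped, letterboxed, or stretched on top of the resampling.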


-

2013-02-02, 04:40 AM #29

-

2013-02-02, 04:45 AM #30

-

2013-02-02, 05:06 AM #31

-

2013-02-02, 05:12 AM #32

I'm on a 2880×1800 retina display, and it's quite something, for WoW and for work. But more on topic, Japan already announced moving anime production to the 4k model by 2014, with all anime broadcast to be in 4k by the end of 2015.

-

2013-02-03, 04:01 PM #33

I could sit all of you down 10ft from a 1080p TV and a 4k TV and none of you could tell me which one was which.

NZXT Phantom

ASRock Extreme4 Gen3 | I5 2500k | EVGA GTX 560 TI

8gb Corsair Vengence | 60gb Agility3 SSD | 1tb Seagate 7200rpm

-

2013-02-03, 04:28 PM #34

-

2013-02-03, 05:32 PM #35

This is taken directly from CNET:

"The eye has a finite resolution:

This is basic biology. The accepted "normal" vision is 20/20. In response to my previous articles on the stupidity of 4K TVs, many people argued they had better vision, or some other number should be used. This is like arguing doors should be bigger because there are tall people. Also, just because you have better vision, doesn't mean most people have better vision. If they did, it wouldn't be better, it would be average.

Try this. Go to the beach (or a big sandbox, or a baseball diamond). Sit down. Start counting how many grains of sand you can see next to you. Now do the same with the grains of sand by your feet. Try again with the sand far beyond your feet (like, say, 10 feet away). The fact that you can see individual grains near you, but not farther away is exactly what we're talking about here. The eye is analog. Randomly analog at that. So of course some people are going to see more detail than others, and at different distances, but 20/20 is what everyone knows, and it is by far the most logical place to start any discussion.

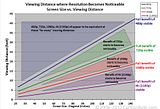

Is there some wiggle room thanks to variances in how people see? Yes, of course. Here's an awesome chart:

Let's skip ahead a step. Getting bogged down in the specifics misses the big picture. The eye does have a finite resolution, and if you want to argue it's better than 20/20, you're still conceding the point. You're just saying that smaller 4K TVs are viable. How much smaller? Well, not 50 inches. Probably not 60 inches, either. These are the sizes people are buying. Most people are buying even smaller TVs. Which leads to..."

The rest of the explanation is available here: http://reviews.cnet.com/8301-33199_7...-still-stupid/

-

2013-02-03, 07:05 PM #36

Even if that article is scientifically correct (which I doubt), one would still be able to take full benefit of a 30-inch 4k monitor when sitting 1 meter away.
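The 30-inch-at-1-meter case can be checked against the usual rule of thumb that 20/20 vision resolves detail of about one arcminute. That one-arcminute figure is an assumption brought in here, not something the quoted article states, and the helper names are made up for illustration:

```python
import math

ARCMIN_20_20 = 1.0  # assumed acuity limit for 20/20 vision, in arcminutes

def pixel_pitch_mm(diagonal_in: float, h_px: int, v_px: int) -> float:
    """Centre-to-centre pixel spacing in millimetres."""
    return diagonal_in * 25.4 / math.hypot(h_px, v_px)

def arcmin_per_pixel(pitch_mm: float, distance_mm: float) -> float:
    """Visual angle of one pixel, in arcminutes, at the given distance."""
    return math.degrees(math.atan2(pitch_mm, distance_mm)) * 60

def max_resolving_distance_mm(pitch_mm: float,
                              acuity_arcmin: float = ARCMIN_20_20) -> float:
    """Farthest distance at which a single pixel still spans the acuity limit."""
    return pitch_mm / math.tan(math.radians(acuity_arcmin / 60))

pitch_4k = pixel_pitch_mm(30, 3840, 2160)
pitch_1080 = pixel_pitch_mm(30, 1920, 1080)

angle_4k = arcmin_per_pixel(pitch_4k, 1000)      # 1 meter
angle_1080 = arcmin_per_pixel(pitch_1080, 1000)
limit_4k = max_resolving_distance_mm(pitch_4k)

print(f"30in 4k at 1 m:    {angle_4k:.2f} arcmin per pixel")
print(f"30in 1080p at 1 m: {angle_1080:.2f} arcmin per pixel")
print(f"4k pixels span 1 arcmin out to about {limit_4k:.0f} mm")
```

Under that assumption the 1080p panel comes out coarser than one arcminute per pixel at 1 m and the 4k panel finer, so the step up from 1080p should be visible at that distance, while individual 4k pixels would only be resolvable from closer in.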

Intel Core i5 2500k @ 4.7GHz | MSI GTX 980 Gaming 4G x2 in SLI | ASRock Extreme3 Gen3 Motherboard

8 GB of Kingston HyperX DDR3 | Western Digital Caviar Green 1 TB | Western Digital Caviar Blue 1 TB

2x Samsung 840 Pro 128 GB + Corsair Force 3 120 GB SSDs (three-way raid 0)

Cooler Master HAF 912 plus case | Corsair AX1200 power supply | Thermaltake NiC C5 Untouchable CPU cooler

Asus PG278Q ROG SWIFT (1440p @ 144 Hz, GSync + 3D vision)

-

2013-02-03, 08:19 PM #37

Well, that is what it says. The point is TV based, not about smaller, desk based monitors. As I've said before, 4k's main selling point will be the smaller screen market. In that 1-5ft range, it's pretty much perfect. For across the room viewing though, there's little to recommend a 4k 40~50" TV over a 1920x1080 one.

-

2013-02-03, 08:36 PM #38

no, it doesn't

let's start with what resolution is: it is the number of pixels resolved in a limited area, it specifically has nothing to do with pixel size or pixel density

4k on a 100ft screen will still look as bad as 1080 on a 5 ft screen, if you want to defeat the eye, you don't need to look at the sharpness of a person's vision, or the resolution, you need to look at the pixel size and pixel density

a perfect screen would have a pixel that is about 90 microns across with less than 5 micron gap between pixels, this means a screen needs a pixel per square inch count of 71,486, or 267.3PPI

that means that a 60" flatscreen needs a resolution of 13964 x 7850, and that's only for a 60", a 70" screen needs a resolution of 16287 x 9158, which is much higher than the 4k or 8k resolution shown on Sharp's 84" screens at CES

it's not that this is impossible, we have smartphones and tablets that approach or exceed 267PPI, but the thing to remember is that you need to increase the resolution with the screen size to maintain the PPI
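The numbers in this post can be reproduced from the stated pixel pitch. A sketch assuming a 16:9 panel and a 95 micron pitch (90 micron pixel plus a 5 micron gap); the function names are illustrative:

```python
import math

MICRONS_PER_INCH = 25_400

def ppi_from_pitch(pixel_um: float, gap_um: float) -> float:
    """Pixels per inch for a given pixel width plus inter-pixel gap."""
    return MICRONS_PER_INCH / (pixel_um + gap_um)

def required_resolution(diagonal_in: float, ppi: float,
                        aspect: tuple[int, int] = (16, 9)) -> tuple[int, int]:
    """Pixel dimensions needed to hold a given PPI at a given diagonal."""
    aw, ah = aspect
    diag_units = math.hypot(aw, ah)
    width_in = diagonal_in * aw / diag_units
    height_in = diagonal_in * ah / diag_units
    return round(width_in * ppi), round(height_in * ppi)

ppi = ppi_from_pitch(90, 5)
per_square_inch = ppi ** 2

res_60 = required_resolution(60, ppi)
res_70 = required_resolution(70, ppi)

print(f"{ppi:.1f} PPI, {per_square_inch:,.0f} pixels per square inch")
print(f'60": {res_60[0]} x {res_60[1]}')
print(f'70": {res_70[0]} x {res_70[1]}')
```

This lands within a fraction of a percent of the figures quoted above; the small differences come down to rounding of the PPI value. It also shows why the required resolution grows linearly with the diagonal when PPI is held fixed.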

next time you quote an article, make sure it's not an editorial article written by an idiot who skipped biology class

Last edited by Cyanotical; 2013-02-03 at 08:46 PM.

-

2013-02-03, 08:53 PM #39

As an owner of the first laptop which comes close to the 4k resolution, I wouldn't want to go back ever again. It makes working with text (writing papers and programming) SO much more pleasant! However, there are a number of challenges to solve before this can become widespread.

For 4k to become mainstream we need properly working resolution independence support in all mainstream OSes. Without resolution independence, text is pretty much unreadable at the native resolution of such a monitor. And here is where it becomes problematic. Windows actually has some good resolution independence, in the .NET framework. Most 'legacy' win32 programs will be in big trouble though. Apple has a very good, high-quality working implementation, but there are still some minor performance quirks. The nice thing about Apple's implementation is that it works for lots of legacy programs as well, because they designed the OS with resolution independence in mind from the start. Linux... well, there seems to be a branch for resolution-independent GTK+, but I have no idea whether it is actually working. De facto, Linux appears to be miles behind the other two.

P.S. Proper HiDPI content looks MASSIVELY better on a HiDPI screen. Especially pictures. That level of detail is astonishing!

Last edited by mafao; 2013-02-03 at 08:55 PM.

-

2013-02-03, 09:01 PM #40

a retina macbook is not close to 4k, 2560x1600 and the fun 1440x900 with double pixels are not really even that close:
